A woman turned to ChatGPT before poisoning 3 men in South Korea, police say - BERITAJA


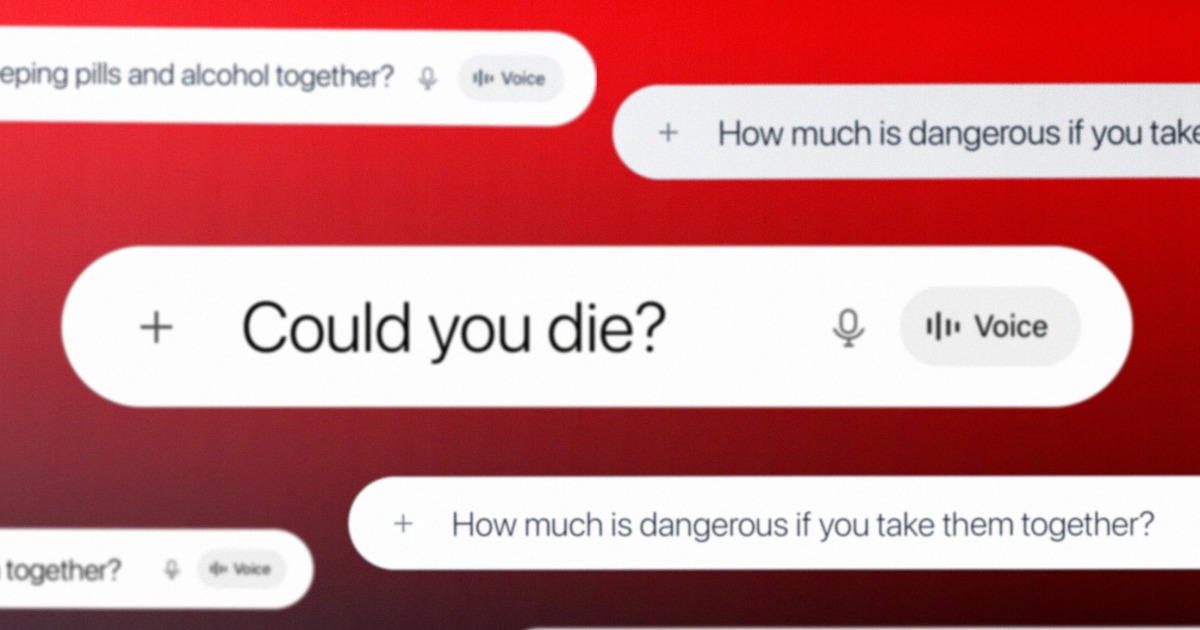

SEOUL, South Korea — “What happens if you take sleeping pills and alcohol together?”

“How much is dangerous if you take them together?”

“Could you die?”

Those are the questions police in South Korea say Kim So-young asked ChatGPT shortly before she gave two men a combination of alcohol and benzodiazepine, leading to their deaths. Prosecutors allege Kim gave the drugs to three men, two of whom died, while the other was injured. Investigators have turned to ChatGPT conversations forensically extracted from Kim’s phone in an effort to show intent.

“This is not only important as evidence in itself, but also because the very fact that conversations with ChatGPT are being admitted as direct evidence in a murder case is highly noteworthy,” Nam Eonho, a senior lawyer at the law firm Vincent and counsel for the family of one of the victims, said in a phone interview.

“If such evidence were not admitted, it would be difficult to prove the defendant’s intent to kill, which is a key element of the crime,” Nam said.

Beritaja contacted South Korea’s Supreme Prosecutors’ Office, which oversees the Seoul Central District Prosecutors’ Office handling the case, for comment. The office did not immediately respond. Kim has denied any intent to kill, saying in court that the deaths were accidental. Nam said the chat log evidence contradicts that.

The case may be the first of its kind in South Korea. Yet it is part of a growing series of high-profile criminal cases in which people are accused of using AI programs to assist violent crimes. Most publicly documented cases have involved ChatGPT, but Google’s Gemini was recently named in a civil suit that alleged the chatbot aided a man who planned to commit a mass casualty event near Miami’s airport. Experts say use of such tools for nefarious means is likely to accelerate as chatbots become more widespread, as online search did when it debuted. As OpenAI faces several lawsuits tied to allegations its tool was used to carry out crimes, the AI industry is just beginning to grapple with its role in mitigating physical harms and how to work with law enforcement.

OpenAI did not respond to questions about the case or how often it refers cases to law enforcement, including questions about which law enforcement agencies it may be working with. It pointed to a letter written in response to a shooting in Canada and a blog post about community safety.

It is not yet known whether the judge presiding over Kim’s case in South Korea will admit the ChatGPT logs as evidence. The trial is ongoing. The case has drawn significant attention in the country. Local media reported that a courtroom overflowed with journalists and observers at the latest hearing, on May 7.

In February, police arrested Kim on charges of murder and violating South Korea’s Narcotics Control Act, alleging she gave men toxic drinks containing benzodiazepine and other drugs under the guise of a hangover cure. Beginning in mid-December, Kim sought out dates with men, took them to a motel and then gave them the substance, in fear of unwanted physical contact, authorities allege. The first victim survived after a two-day coma. Authorities said Kim then consulted ChatGPT about dosages and adjusted them before she gave them to the second and third victims. The full chat logs have not been released and instead have been quoted and cited by the police.

Police have determined that the third victim, whose estate is represented by Nam, met Kim on Feb. 9 at a motel in Seoul. She handed him the beverage laced with medication, Nam said. After the man collapsed, Nam said, she used his phone to order food delivery and left with it. The police arrived the following day, after the man had already died. Nam said an autopsy report he had seen concluded he died from drug poisoning.

“In a sense, the suspect received guidance from ChatGPT and then used that information as a means to carry out the crime,” Nam said. “This makes the case unique in that ChatGPT searches were directly used as a tool in the commission of the offense.”

While the police are also using social media posts and CCTV cameras alongside the chat log evidence, it is the conversations with ChatGPT that may be critical in determining whether Kim meant to kill the victims. The next trial date is set for June.

Kim’s case echoes a growing slate of similar incidents in North America, where the alleged perpetrators used ChatGPT to ask for instructions important to the crime. The systems’ developers have distanced themselves from illegal actions and the pending legal cases in the U.S. and Canada.

The cases have put pressure on OpenAI.

After an 18-year-old shooter killed eight people in Tumbler Ridge, British Columbia, in February, OpenAI CEO Sam Altman wrote a letter apologizing to the community for not having informed law enforcement of the shooter’s account. The perpetrator described scenarios involving gun violence to ChatGPT for several days before the account was banned in June, eight months before the shooting. The company did not alert law enforcement. In April, families of those killed and injured filed seven federal lawsuits against OpenAI, alleging it failed to take measures that could have prevented the shooting.

“While words could never be enough, I believe an apology is necessary to acknowledge the harm and irreversible loss your community has suffered,” Altman wrote, committing to work with authorities to prevent future crimes.

The suspect in the shooting at Florida State University in April 2025 was in “constant communication with ChatGPT,” the state’s attorney general, James Uthmeier, said at a news conference. The attack killed two people. Uthmeier launched a criminal investigation to determine the role OpenAI’s product played in the attack. He said ChatGPT “advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful in short range.”

A spokesperson for OpenAI said at the time that “ChatGPT is not responsible for this terrible crime,” adding that the responses the chatbot gave “could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity.”

The family of one of the victims in the FSU shooting sued OpenAI on Sunday.

ChatGPT and generative AI have also been used “to research explosives and ignition mechanisms” in the January 2025 Tesla explosion outside Trump International Hotel Las Vegas, according to Las Vegas police. A North Carolina school therapist is alleged to have used ChatGPT to research “lethal and incapacitating drug combinations that could be ingested and injected” to poison her husband last year. In October, a 17-year-old Florida teen is alleged to have used the tool in an effort to stage his own kidnapping.

Experts say the admission of ChatGPT and similar tools in criminal cases is nascent. Yet there is hardly a legal process the technology has left undisrupted. Lawyers and victims use chatbots to build cases, sometimes with so many errors that judges ban using them in their courtrooms. Some defendants use them to doctor evidence or to call genuine evidence into question. Now, an expanding body of casework pointing to the use of generative AI in crimes is emerging. For many in the field, the cases that are in the public eye are only the tip of the iceberg.

“It’s unsurprising that criminals use chatbots that are willing to help plan crimes,” said Max Tegmark, a physicist and machine learning researcher at the Massachusetts Institute of Technology and chair of the Future of Life Institute, a nonprofit organization that seeks to reduce risks from transformative technologies.

“There are fewer safety standards for AI than there are for sandwiches,” Tegmark said. “The obvious solution is binding safety standards such that companies can’t release AI systems until they refuse criminal activity.”

Some argue that using a chatbot is not so different from a simple Google search, with both producing digital information trails showing how criminals planned their actions. But Nam, the lawyer in the South Korean case, said chatbots create a new type of scenario.

“The real problem is that this conversational format may allow potential criminals to engage in ‘dialogue’ with ChatGPT without a sense of guilt,” he said.

“If the suspect had asked a human being about the dosage or administration of a toxic substance, that person would naturally question the intent: why someone would want such specific information about administering poison,” he said. “However, ChatGPT does not filter such questions through ethical judgment.”

As the industry begins to grapple with the misuse of its technology, it faces similar questions about safeguarding as breakthroughs of the past, such as seat belts in cars, moderation on social media or warning labels on potentially toxic products.

“We will reach an equilibrium that everyone feels comfortable with,” said Anat Lior, an assistant professor of law at Drexel University who has studied AI governance and accountability. “We’re just not sure what that balancing act looks like yet.”
